Axiomatic Intelligence: When the AI Cannot Afford to Guess

AI agents are about to make decisions on your behalf. They read the same polluted web you already distrust.

Part 3 of a three-part series on Axiomatic Intelligence, a post-probabilistic architecture for the age of noise.

In Part 1 of this series, we diagnosed the disease. The internet has collapsed into a recursive loop of marketing summaries citing marketing summaries. AI trained on this pollution reproduces it fluently. We called this the Beige Singularity: the convergence of all information toward a single, confident, wrong answer.

In Part 2, we showed the architecture that fixes it. A structural separation of truth from revenue called the Fiduciary Diode. An adversarial process that collides marketing claims against physical reality. A cryptographic receipt that proves the system worked as designed.

We built this for commerce first. We proved it works on espresso machines and running shoes and frying pans, where a bad recommendation costs you $400 and a weekend wondering whether you made the right call.

But the architecture is not about espresso machines. The architecture is about what happens when AI cannot afford to be wrong.

The Wrong Prescription

You ask an AI about a drug interaction. It summarizes WebMD. WebMD summarized a pharmaceutical press release. The press release emphasized efficacy and buried the contraindication data in paragraph nine. The AI tells you the medication is “generally well tolerated.” You take it with your existing prescription. The interaction was documented in the clinical literature. The AI never mentioned it.

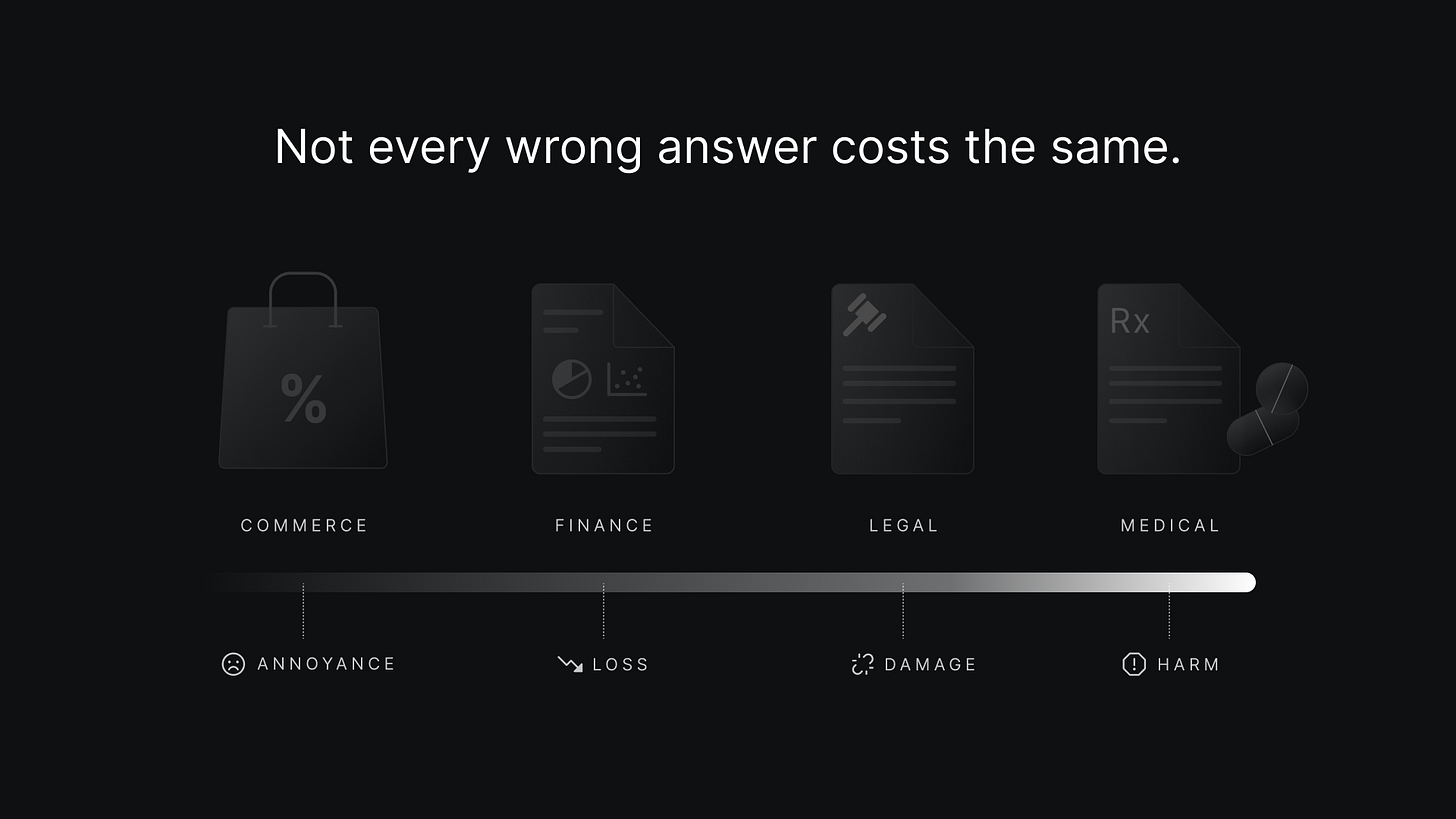

This is not hypothetical. This is the same architecture that recommended the Breville to us in Part 1 of this series. The same probabilistic averaging. The same Receipt Cosplay. The same confident summary built on a foundation of nothing. The only difference is the cost of being wrong.

Consider legal advice. You ask an AI whether your landlord can withhold your security deposit. The AI summarizes tenant law from twelve states and produces a confident average. The average does not apply to your state. The specific statute that protects you, the one buried in a 2019 amendment that most legal blogs have not indexed, does not appear in the summary. You accept the loss. The protection existed. The AI could not find it because finding it required adjudication, not summarization. It required knowing which jurisdiction governs, which statute applies, and whether the statute has been amended since the training data was collected.

Consider financial decisions. You ask an AI whether to refinance your mortgage. It summarizes articles written during a different rate environment. The articles were SEO optimized to capture “should I refinance” traffic. They recommend refinancing because that recommendation drives affiliate revenue for mortgage brokers. The AI reads this as consensus. It is not consensus. It is an economic incentive wearing the skin of advice.

The pattern is identical across every domain. Probabilistic summarization produces fluent, confident, wrong answers. The only variable is the severity of the consequence. Commerce is where we proved the fix works. Healthcare, law, and finance are where the fix becomes even more urgent.

A Million Machines, One Wrong Answer

This becomes critical when you understand what is about to happen.

Right now, you are the one asking the AI. You read the answer. You apply your judgment. You catch some of the errors. You open the 15 tabs. You do the work.

That is about to end. AI agents are proliferating across every surface of your life. Your phone has one. Your email client is getting one. Your car will have one. Your refrigerator, your thermostat, your enterprise procurement system, your health insurance portal will each have an embedded AI agent making decisions on your behalf. These agents will not open 15 tabs. They will not read the Reddit thread. They will not apply your judgment. They will query a model, receive a probabilistic summary, and act on it. Automatically. At scale. Without asking you.

Every one of those agents faces the same Beige Singularity we diagnosed in Part 1. They are all trained on the same polluted web. They all summarize the same noise. They all reproduce the same biases. But now they are acting on those biases without human review. Your refrigerator orders groceries based on a product recommendation generated by averaging affiliate content. Your insurance portal triages a claim based on a summary of pharmaceutical marketing dressed as clinical guidance. Your procurement system selects a vendor based on the vendor’s SEO budget rather than their delivery reliability.

The Four Commitments

In Parts 1 and 2, we showed specific implementations. The Fiduciary Diode. The ARC Protocol. The Proof Packet. Those are our solutions. They are instances of a more general pattern.

Axiomatic Intelligence adjudicates claims against reality rather than summarizing consensus. It continuously refines verified truth through adversarial processes and serves it at runtime, as opposed to generating probabilistic outputs from static training data.

This is a category, not a product. It is a paradigm with specific commitments. Any system that meets these commitments is practicing Axiomatic Intelligence. Any system that does not is practicing Probabilistic Intelligence, no matter how sophisticated its models. The commitments are four. Remove any one and the system collapses back into probabilistic slop.

1.) Kinetic refinement over static storage. Truth decays. A product review written six months ago may no longer reflect reality. A firmware update fixes the overheating problem. A new batch introduces a manufacturing defect. A price that was competitive in January is overpriced by March. Static knowledge bases rot. They store facts and forget them. A system that verified a claim in October and serves it unchanged in February is not serving truth. It is serving a memory of truth.

Axiomatic Intelligence treats knowledge as alive. Every verified claim, what we call a Kinetic Axiom, carries a decay rate and explicit mutation triggers. The decay rate represents how quickly this type of truth goes stale. Physical specifications decay slowly. Prices decay rapidly. Sentiment decays at medium rates. The mutation triggers define the specific events that force reverification: a firmware release, a price movement, a sentiment shift, a contradictory signal from transaction data.

The system does not reverify on a fixed schedule. It does not wait for someone to ask. It watches for signals that reality has changed and reverifies when the signals fire. We call this Signal-Gated Compute: expensive verification triggered by market signals, not by clocks or queries. The truth stays fresh.

2.) Adversarial verification over summarization. Truth is not found by averaging. It is found by forcing conflicting evidence into direct confrontation and seeing what survives. We described this process in Part 2 as the ARC Protocol, where four vectors collide to produce verdicts: marketing claims against physical measurements against user consensus against transaction data.

The specific vectors differ by domain. In healthcare, the collision might be pharmaceutical claims versus clinical trial results versus patient reported outcomes versus insurance claims data. In law, it might be statutory text versus judicial interpretation versus practitioner commentary versus case outcome data. The principle is constant. A claim that collapses under adversarial pressure was never true. A claim that survives from multiple independent vectors has earned its confidence score. Collision, not aggregation.

3.) Private signal over public data. The public web is gameable. Marketing can be manufactured. Reviews can be faked. SEO can be purchased. Any verification system that relies exclusively on public data is vulnerable to the same pollution that created the Beige Singularity in the first place. Axiomatic Intelligence requires a calibration layer, a source of signal generated by real behavior rather than published content.

In our implementation, this is what we call The Ore: proprietary transaction data from millions of commerce events. Return rates. Cart abandonment patterns. Support conversations. When a user initiates a return, that is a costly signal. They paid money, received the product, used it, and decided it was not worth keeping. You cannot fake that at scale. The private signal overrules public data when they conflict. Costly signals outweigh cheap ones. Without this calibration layer, even an adversarial system risks becoming a sophisticated hallucination engine, one that litigates public claims against other public claims without ever touching ground truth. Behavior beats opinion.

4.) Cryptographic alignment over policy commitment. Every output must come with a verifiable receipt showing which inputs were visible, which evidence was consulted, and how the conclusion was reached. Every recommendation system claims to be unbiased. None of them prove it. The claim is unverifiable by design. Users must trust the system’s promises about its own alignment.

Axiomatic Intelligence makes alignment provable. Not through policy commitments, but through structural constraints that are mathematically verifiable. If the ranking system cannot see revenue data, it cannot optimize for revenue. This is not a promise. It is a fact about the architecture. And the proof is generated for every output. If the structural separation was bypassed, the receipt reveals it. If the adversarial process was skipped, the receipt reveals it. Auditability is the immune system. Without it, the other three commitments are claims. With it, they are facts. The proof is the product.

Verdicts, Not Vibes

Probabilistic Intelligence asks: “What is the most likely output given my training data?”

Axiomatic Intelligence asks: “What do we actually know to be true, and how do we know it?”

The first question produces consensus. The second produces verdicts. They are not the same thing.

The output of this process is the Kinetic Axiom: a living truth object that carries a claim, a confidence score, an evidence trace showing how the claim was verified, a decay rate tracking how fast it goes stale, and mutation triggers that force reverification when reality changes. Not a summary. A verdict with a receipt.

The tradeoff is real. Probabilistic Intelligence scales easily. Axiomatic Intelligence scales expensively. You cannot run the adversarial collision on every query in real time. The compute cost is prohibitive. This is why the architecture is a refinery, not a chatbot. The adversarial work happens offline, continuously, category by category, claim by claim, signal by signal. The results are stored as Kinetic Axioms. When an agent queries the system, it receives a precomputed verdict in milliseconds. The hard work was done yesterday. The answer is served today.

The Invitation

The tools of Axiomatic Intelligence (Quad-Vector Collision, Signal-Gated Compute, Neuro-Symbolic Adjudication, Cryptographic Diodes) are applicable wherever truth is contested and noise is abundant. Healthcare, law, finance, scientific literature, procurement: the principles transfer. We start with commerce because it is a domain we understand deeply, where we have accumulated proprietary signal that cannot be replicated, and where the economic model for monetizing verified truth is clear. We have been building verification infrastructure for 16 years. SimplyCodes processes over a billion dollars in annual transaction value using these very principles.

The Beige Singularity is not inevitable. It’s a consequence of treating information as a distribution to be averaged. A different paradigm produces different outcomes. We think adjudication is that paradigm. The work is underway. You can check out the full architecture in our whitepaper on GitHub.