Up to 60% of Promo Codes Are Dead. You Pasted One at Checkout Last Week.

Publishing codes is free. Adjudicating which ones actually work took fifteen years.

You pasted a promo code at checkout. It failed.

You know this ritual. You built the cart. You entered your payment details. You were one click from done. Then you saw the field, “Have a promo code?”, and you did what any reasonable person would do. You tried to save twelve dollars.

New tab. “[store name] promo code.” First result. Copy. Paste. Nothing. Back to the search results. Another site, another code. This one from 2023. Another site. This one wants you to install something. Another. Says “applied” but the price did not change.

Ten minutes gone. You feel stupid for trying. You close all the tabs and buy at full price, carrying a low-grade suspicion that you just overpaid.

This is the Checkout Crucible. It happens millions of times a day. The cost is not the twelve dollars. The cost is the ten minutes of your life spent clicking through recycled garbage. The learned helplessness. The erosion of trust at the exact moment you were ready to buy.

I spent fifteen years building SimplyCodes and, underneath it, the system that adjudicates this chaos. I traced the physics of why it keeps happening. The answer is not that codes are unreliable. The answer is structural.

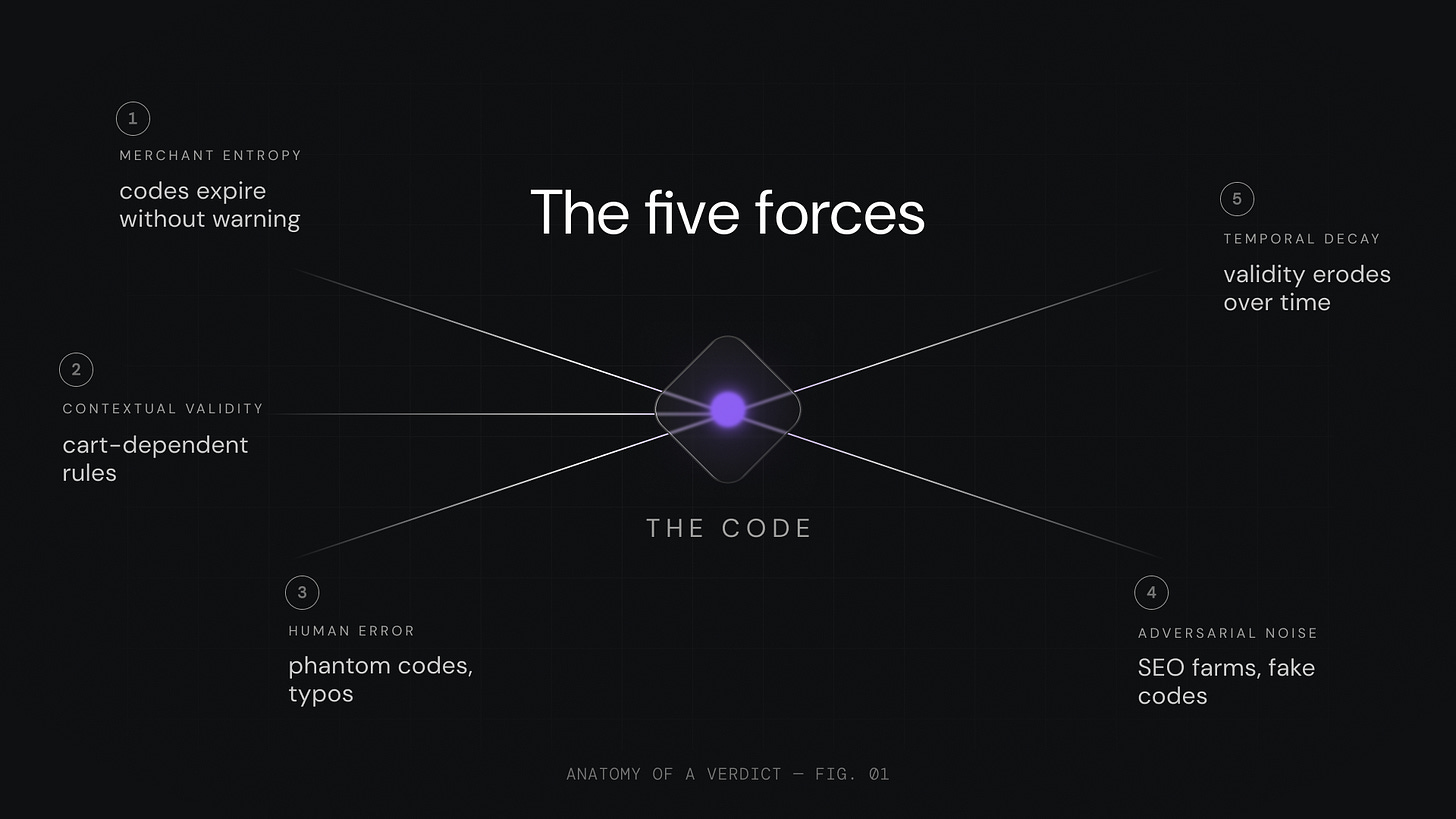

Forty to sixty percent of promo codes on the public internet are dead, restricted, or misleading. Not one problem. Five.

Five Forces That Make Coupon Codes a Chaos Engine

These five forces compound. Each one would be manageable alone. Together, they make the default state of any coupon code untrustworthy.

Merchants expire codes with no announcement. A code that worked Monday dies Tuesday. No changelog, no notification, no public record. A flash sale code lives six hours. A seasonal code lasts weeks. An evergreen welcome offer survives months. The decay is invisible until the moment of failure.

The same code produces different results depending on your cart. “SAVE20” works. Unless you have sale items, unless your subtotal is under fifty dollars, unless you are a returning customer. The code exists. It does not apply to you. You cannot know this without testing it against your specific checkout.

Human error creates phantom codes. Merchants fat-finger expiration dates. Affiliates copy codes with typos. One wrong character creates a phantom code that haunts the ecosystem for months.

Affiliates republish stale codes for revenue. The business model of most coupon sites is simple. Aggregate codes, publish them, earn affiliate commission when someone clicks through. Whether the code works is irrelevant. The click is the product, not the code.

SEO farms publish codes that never existed. The incentive structure of the internet actively rewards pollution. Publishing a page titled “[Store Name] Promo Code 2026” captures search traffic regardless of whether a valid code exists. The page is the asset. Truth is optional.

Five forces. One result. The default state of the coupon ecosystem is chaos. And the uncomfortable reality is that no one in the industry had ever published how they separate signal from noise. Most do not separate it at all. They aggregate and republish.

We decided to change that. We published how the machine works, where it breaks, and what we still cannot do.

Verification Is an Engineering Problem, Not a Data Problem

The instinct is to think coupon verification is a data collection exercise. Scrape enough codes, update them fast enough, problem solved.

It is not.

The five forces above are not a data problem. They are an adversarial environment. Codes decay at variable rates. Merchants change their systems without warning. The same code is simultaneously true and false depending on cart context. The ecosystem is polluted with codes that were never real.

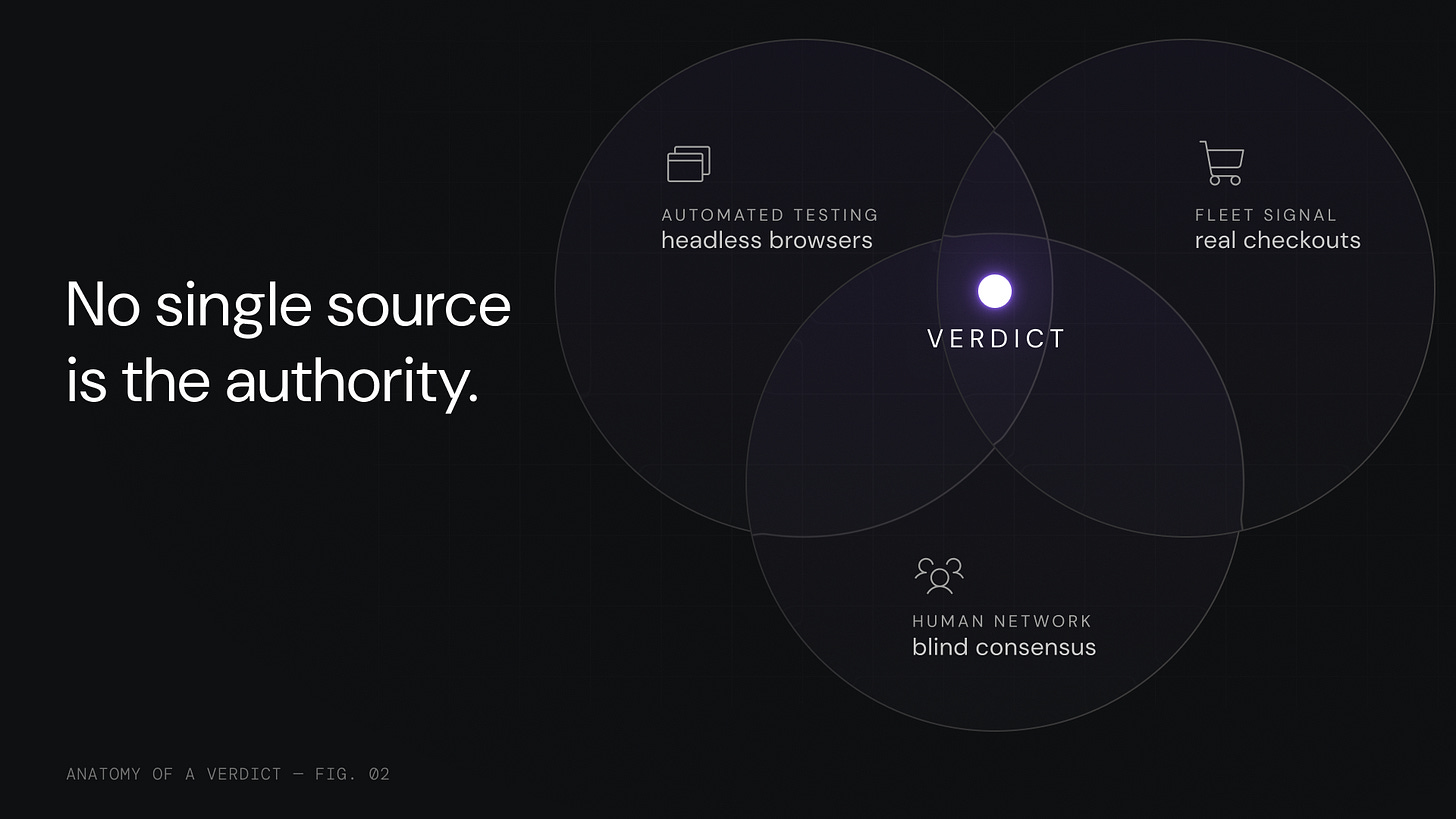

This is structurally identical to a problem in distributed systems engineering called Byzantine Fault Tolerance. BFT assumes any single node in a network may be lying, malfunctioning, or compromised. You do not trust any one source. You design the system so truth can only emerge when independent signals converge.

We applied this to promo codes.

A bot test can miss an edge case. A human verifier can make an error. A user report can be wrong. No single layer is the authority. The verdict comes from all three.
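To make the convergence idea concrete, here is a minimal Python sketch. It is an illustration, not the production logic. The `Signal` values and the agreement rule (two layers agree, none contradicts) are assumptions chosen for the example.

```python
from enum import Enum


class Signal(Enum):
    WORKS = "works"
    FAILS = "fails"
    UNKNOWN = "unknown"  # the layer was blocked or has no data


def converge(bot: Signal, human: Signal, fleet: Signal) -> Signal:
    """Return a verdict only when independent layers agree.

    Assumed rule: at least two layers report the same outcome
    and no layer reports the opposite one.
    """
    votes = [bot, human, fleet]
    works = votes.count(Signal.WORKS)
    fails = votes.count(Signal.FAILS)
    if works >= 2 and fails == 0:
        return Signal.WORKS
    if fails >= 2 and works == 0:
        return Signal.FAILS
    return Signal.UNKNOWN  # disagreement or too little evidence
```

No single argument can force a verdict on its own. A lone "works" from the bot layer stays `UNKNOWN` until another layer confirms it.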

Automated Code Testing

Headless browsers across 515,000 merchants. Tens of thousands of tests every day. Not scrapers. They navigate real storefronts, add products to carts, apply codes, and capture what happens. Exactly as you would.

Different merchants require different approaches. For major e-commerce platforms with semi-standardized checkouts, we build deterministic adapters. They know exactly where the promo field lives, how to read the cart response, what success and failure look like. Thousands of merchants run on a handful of platforms. This covers a large portion of online retail.

For custom or less common platforms, the system uses heuristics. It scans for common checkout patterns. It locates input fields, diffs the page after code entry, detects price changes or error messages.
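A rough Python sketch of the diffing step, under loud assumptions: the checkout is reduced to plain text, the last dollar amount on the page is treated as the order total, and the error phrases in `ERROR_HINTS` are invented for illustration. Real storefronts are messier than this.

```python
import re
from decimal import Decimal

# Matches amounts such as $48.00 or $1,250.00
PRICE = re.compile(r"\$\s*(\d+(?:,\d{3})*(?:\.\d{2})?)")
ERROR_HINTS = ("invalid", "expired", "not valid", "cannot be applied")


def last_price(text: str):
    """Return the last dollar amount in the text, assumed to be the total."""
    matches = PRICE.findall(text)
    return Decimal(matches[-1].replace(",", "")) if matches else None


def classify(before: str, after: str) -> str:
    """Compare checkout text captured before and after code entry."""
    lowered = after.lower()
    if any(hint in lowered for hint in ERROR_HINTS):
        return "error_message"
    old, new = last_price(before), last_price(after)
    if old is not None and new is not None and new < old:
        return "price_dropped"
    return "no_change"
```

The `no_change` branch matters. It is the case where a page says “applied” but the total stays the same.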

For truly custom flows where heuristics fail, we deploy an LLM to read the page as a human would. The bot captures the checkout DOM and asks: Where is the promo code input? What changed after entry? What error appeared? This agentic layer handles arbitrary checkout flows without pre-built adapters.

We pick the cheapest, most reliable method for each merchant and fall back to more expensive intelligence only when needed.
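The cheapest-first fallback can be sketched in a few lines of Python. Everything named here is hypothetical: the three stand-in strategies, the merchant hostnames, and the convention that a strategy returns `None` when it cannot decide.

```python
from typing import Callable, Optional

# A strategy returns "works", "fails", or None when it cannot decide.
Strategy = Callable[[str, str], Optional[str]]


def run_cheapest_first(merchant: str, code: str,
                       strategies: list[tuple[str, Strategy]]):
    """Try strategies in cost order and stop at the first conclusive one."""
    for name, strategy in strategies:
        result = strategy(merchant, code)
        if result is not None:
            return name, result
    return None, None  # every automated method was inconclusive


# Stand-in strategies for illustration only.
def platform_adapter(merchant: str, code: str) -> Optional[str]:
    known = {"shop.example": "works"}
    return known.get(merchant)


def heuristic_scan(merchant: str, code: str) -> Optional[str]:
    return "fails" if merchant.endswith(".test") else None


def llm_reader(merchant: str, code: str) -> Optional[str]:
    return "works"  # placeholder for a model reading the page


CHAIN = [("adapter", platform_adapter),
         ("heuristic", heuristic_scan),
         ("llm", llm_reader)]
```

Ordering the chain by cost means the expensive reader runs only for merchants the cheaper methods could not handle.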

Now here is something competitors will not tell you. We will.

Our automated tests reliably answer “Does this code exist and function in the merchant’s system?” They do not yet answer “Will this code work for your specific cart?” A code can pass our test and fail for you if you have sale items or do not meet a minimum spend. We name this because transparency about what we cannot do is part of the architecture.

A second honest disclosure. We verify existence, not arithmetic. Confirming that “20% off” actually produces a 20% discount on your specific cart total is harder than it sounds. Merchants calculate discounts differently. Display results differently. We catch existence and function. Arithmetic verification is an active build.

And a third. Merchants actively resist verification. Bot detection, CAPTCHAs, dynamic checkout flows, anti-automation defenses. When automated testing is blocked entirely, the multi-layer architecture catches what bots cannot. That is the point of the stack.

The Human Network

Bots simulate checkouts. They cannot reason about edge cases.

We maintain a global network of tens of thousands of trained human verifiers. Millions of monthly actions. Real people testing real codes on real checkouts.

This is not a contractor pool. It is a reputation economy. Contributors build trust scores based on accuracy over time. Get it right consistently and your vote carries more weight. Get it wrong and the system downgrades you. The incentive is accuracy, not volume.
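One simple way to model a trust score that rises with accuracy, as a Python sketch. The formula is an assumption (a smoothed accuracy ratio), not the scoring actually used, and the `Verifier` class is invented for the example.

```python
from dataclasses import dataclass


@dataclass
class Verifier:
    correct: int = 0
    total: int = 0

    def record(self, was_correct: bool) -> None:
        """Log one judgment after its outcome is known."""
        self.total += 1
        if was_correct:
            self.correct += 1

    @property
    def trust(self) -> float:
        # Laplace smoothing: a new verifier starts at 0.5, not at 0 or 1.
        return (self.correct + 1) / (self.total + 2)
```

Because the score is a ratio, submitting more votes does not raise it. Only getting them right does.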

The consensus protocol enforces independence. Blind voting. Verifiers cannot see each other’s assessments until consensus is reached. No herding. No groupthink. Every judgment is genuinely independent. We borrowed the consensus architecture that secures distributed systems and applied it to promo codes.

Killing a code requires multiple independent “no” votes within a short window. A single bad actor or mistaken verifier cannot poison the database. Validating a code has a lower threshold. A single high-trust verifier with strong evidence can boost confidence significantly. The asymmetry is intentional. False positives self-correct because users encountering dead codes quickly submit negative signals.
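The asymmetry can be sketched in Python as two separate rules. The thresholds below (`KILL_VOTES`, `KILL_WINDOW`, `VALIDATE_TRUST`) are invented for illustration; the article does not publish the real values.

```python
from datetime import datetime, timedelta

KILL_VOTES = 3                    # assumed number of distinct "no" voters
KILL_WINDOW = timedelta(hours=6)  # assumed "short window"
VALIDATE_TRUST = 0.9              # assumed bar for a high-trust verifier


def should_kill(no_votes: list[tuple[str, datetime]]) -> bool:
    """True when enough distinct verifiers voted "no" inside one window."""
    votes = sorted(no_votes, key=lambda v: v[1])
    for i, (_, start) in enumerate(votes):
        voters = {who for who, when in votes[i:] if when - start <= KILL_WINDOW}
        if len(voters) >= KILL_VOTES:
            return True
    return False


def should_validate(verifier_trust: float, has_screenshot: bool) -> bool:
    """A single high-trust verifier with evidence is enough to boost a code."""
    return has_screenshot and verifier_trust >= VALIDATE_TRUST
```

Counting distinct voters, not votes, is what stops one mistaken or malicious verifier from killing a code by repeating themselves.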

Every submission requires screenshot proof. The screenshot must show the cart, the discount or error message, and identifiable merchant branding. This evidence becomes part of the code’s permanent audit trail.

The architecture enforces a hard separation between witness and judge. Our tooling does not ask “did it work?” It parses the checkout DOM to capture full cart context. Items, prices, subtotal, merchant response. It packages this into what we call a sensing event. A structured particle of evidence. Not a conclusion. Not an opinion. Raw observation.

Witnesses capture what happened. Judges compute truth from accumulated observations. These roles never blur.
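A small Python sketch of the witness and judge split. The field names on `SensingEvent` are assumptions based on the cart context listed above, and the judging rule is deliberately naive (it ignores shipping and tax).

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class SensingEvent:
    """A raw observation. Note that there is no 'worked' field."""
    code: str
    merchant: str
    items: tuple[str, ...]
    subtotal: Decimal
    total_after: Decimal
    merchant_response: str


def witness(code, merchant, items, subtotal, total_after, response) -> SensingEvent:
    # The witness records what the checkout showed and nothing more.
    return SensingEvent(code, merchant, tuple(items),
                        Decimal(subtotal), Decimal(total_after), response)


def judge(events: list[SensingEvent]) -> float:
    """Share of observations where the total dropped below the subtotal."""
    if not events:
        raise ValueError("no observations to judge")
    discounted = sum(1 for e in events if e.total_after < e.subtotal)
    return discounted / len(events)
```

Because events are frozen and carry no conclusion, the judging rule can change later and be rerun over the same observations.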

Fleet Signal

When a real user with our browser extension checks out and the code works, that is ground truth. Not a simulation. Not a test environment. A real person spent real money and received a real discount.

We capture cart composition at the moment of code entry, exact discount amount, merchant response, success or failure state, timestamp, and session metadata.

This creates a flywheel. More users generate more real-time signal. Better signal produces more accurate scores. More accurate scores build trust. More trust attracts more users. Each rotation deepens the moat.

This is the moat that cannot be purchased. A competitor can build bots. A competitor can hire verifiers. They cannot conjure years of accumulated real-world transaction signal from millions of actual checkouts.

Three layers. Independent signals. Convergent verdicts.

How the System Reaches a Verdict

Every code carries a Health Score, a confidence measure that this code will work right now. A freshness-adjusted trust rating derived from all three layers.

The score is not static. It decays because codes rot. It is not stored as a fixed value in a database column. It is computed from an event stream. Every bot test, every human vote, every real checkout is a sensing event. The Health Score is a live computation over the full history of these events. Not a number in a row. A running verdict.

This means we can recompute any code’s health at any point in time, trace exactly why a score changed, and audit the full evidence chain behind any verdict.
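Computing a score from an event stream, rather than storing it, looks roughly like this in Python. The `Event` shape and the plain success share are assumptions made to keep the sketch short; a real score would weight sources and apply decay.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass(frozen=True)
class Event:
    at: datetime
    source: str   # "bot", "human", or "fleet"
    success: bool


def health_at(events: list[Event], as_of: datetime) -> Optional[float]:
    """Replay events up to as_of and return the success share, or None."""
    seen = [e for e in events if e.at <= as_of]
    if not seen:
        return None  # no evidence existed yet at that moment
    return sum(e.success for e in seen) / len(seen)
```

Passing a different `as_of` reproduces the score as it stood at that moment, which is what makes a past verdict auditable.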

Seven inputs feed the score.

Freshness. When was this code last verified?

Outcome. Did the last test succeed or fail?

Verifier trust. Weighted human consensus accounting for each contributor’s accuracy and tenure.

Automated test confidence. Result reliability from our bot infrastructure.

Merchant behavioral patterns. Over fifteen years, we have built profiles for hundreds of thousands of stores. We know which ones expire codes without warning, which have buggy cart logic, which code formats each retailer uses.

Decay clock. Time-based confidence erosion matched to the code type.

Fleet signal. Real-world confirmations from extension users.
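The decay clock is the easiest input to show in code. This Python sketch assumes exponential decay with a half-life per code type. The half-lives are guesses loosely shaped by the lifetimes mentioned earlier (hours for flash sales, weeks for seasonal codes, months for evergreen offers), not published parameters.

```python
from datetime import timedelta

# Assumed half-lives per code type, for illustration only.
HALF_LIFE = {
    "flash": timedelta(hours=6),
    "seasonal": timedelta(weeks=2),
    "evergreen": timedelta(days=90),
}


def decayed_confidence(confidence: float, code_type: str, age: timedelta) -> float:
    """Halve confidence once per half-life elapsed since last verification."""
    half_life = HALF_LIFE[code_type]
    return confidence * 0.5 ** (age / half_life)
```

The same six hours of silence costs a flash sale code half its confidence and an evergreen code almost nothing.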

Every observation traces through six forensic dimensions. Who observed it, what code was tested, which merchant, what software captured it, which build version, and a session identifier linking observation to verdict. Any conclusion can be audited from verdict back to raw evidence.
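The six dimensions map naturally onto a record type. In this Python sketch the field names are assumptions, and `trace` shows only the simplest possible audit: pulling every observation that shares a verdict's session identifier.

```python
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class Provenance:
    observer_id: str     # who observed it
    code: str            # what code was tested
    merchant: str        # which merchant
    software: str        # what software captured it
    build_version: str   # which build version
    session_id: str      # links the observation to a verdict


def trace(verdict_session_id: str, observations: list[Provenance]) -> list[dict]:
    """Return the raw observations behind a verdict, as plain dictionaries."""
    return [asdict(o) for o in observations if o.session_id == verdict_session_id]
```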

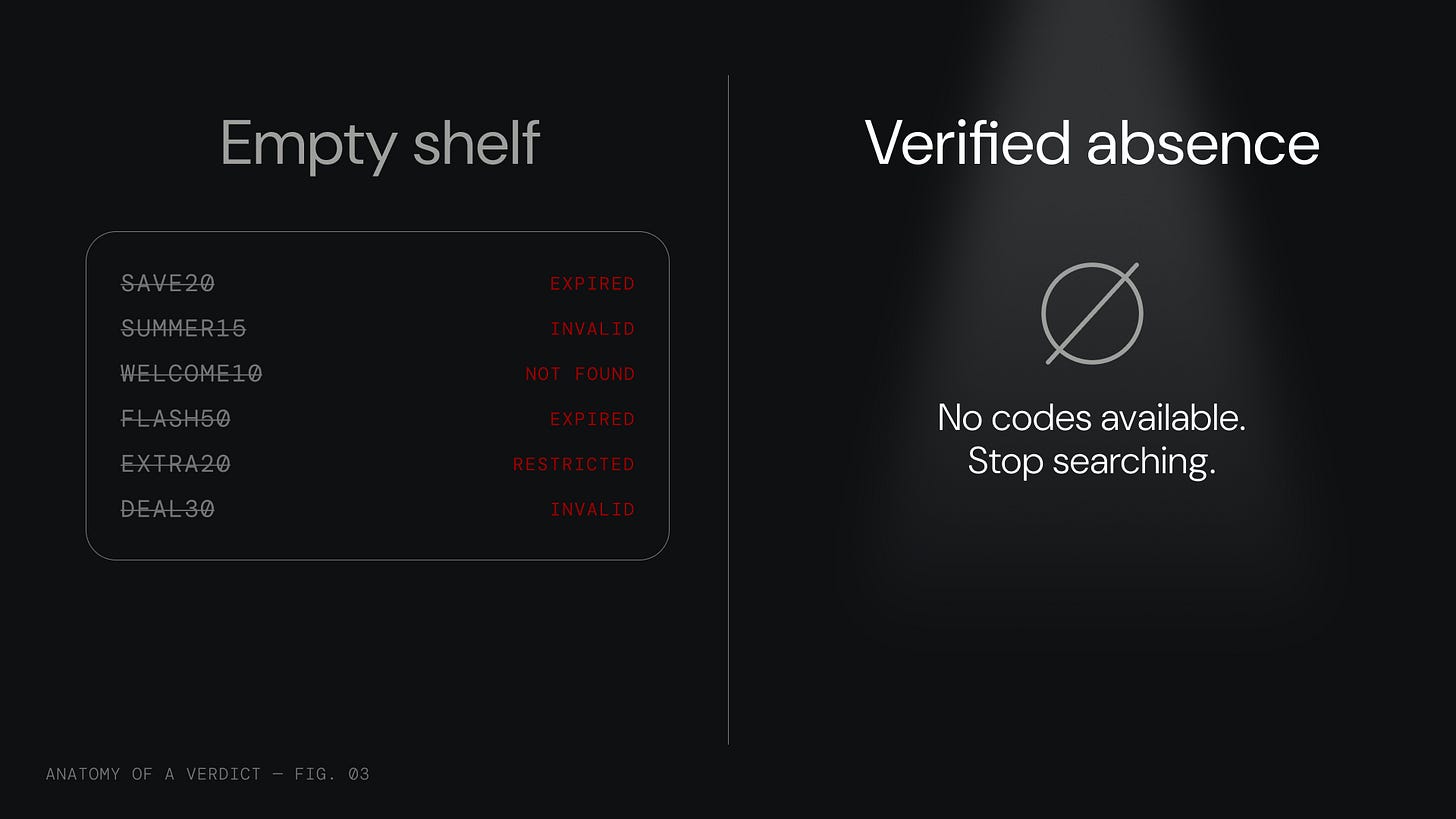

The Confident No

Most coupon sites optimize for “yes.” They want to show you codes. More codes, more clicks, more affiliate revenue. The incentive is volume, not truth.

We optimize for something different.

Closure.

When our system returns “no verified codes available,” that is not a failure. It is a verdict backed by evidence from all three layers.

We tested this merchant with automated systems. We checked human verifier submissions. We analyzed real-time extension signal. The conclusion: there is nothing to find. Stop searching. Buy with confidence.

Think about what that sentence is worth. You were about to spend ten minutes clicking through dead links and recycled garbage. Ten minutes of your life to arrive at the same conclusion our system reached in seconds. The Confident No hands you that time back.

The time saved is the product. The anxiety eliminated is the product. The cognitive closure, the permission to stop and act, that is what you hire us for.

The most valuable thing we can tell you is that there is nothing to find.

That sentence contains our entire product philosophy. Every coupon site in the industry is afraid to tell you “we have nothing.” Showing nothing feels like failure. Showing something, anything, even a dead code from 2019, feels like value.

It is not value. It is noise dressed as signal. It is why you spent ten minutes in the Checkout Crucible.

We built a three-layer architecture to earn the right to say “there is nothing” with evidence. That is the difference between an empty shelf and a verified absence. One is negligence. The other is a verdict.
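The difference between an empty shelf and a verified absence can be expressed as a rule. This Python sketch assumes a "no" counts as a verdict only when every layer has actually looked. The function name, the counters, and the three return labels are all hypothetical.

```python
def closure_verdict(bot_tests: int, human_checks: int,
                    fleet_checkouts: int, working_codes: int) -> str:
    """Decide what to tell the shopper about one merchant."""
    if working_codes > 0:
        return "codes_available"
    every_layer_looked = all(
        n > 0 for n in (bot_tests, human_checks, fleet_checkouts)
    )
    if every_layer_looked:
        return "verified_absence"  # the Confident No
    return "not_enough_evidence"   # an empty shelf, not a verdict
```

With zero working codes, the answer still depends on how much looking was done. That is the whole distinction.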

The Evidence

515,000 merchants covered. Roughly ten times the typical competitor.

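In 2022, the independent testing firm Testbirds tested 500 stores across multiple coupon providers. SimplyCodes had working codes for 334. A 96% coupon availability rate. The next closest competitor reached 138 stores. Competitor availability ranged from 34% to 44%. (The percentages are evidently measured against a narrower base than all 500 stores: 334 of 500 is about 67%, and 138 of 500 is about 28%.)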

Tens of thousands of automated tests every day. Tens of millions of human verdicts every month. Over fifteen years of accumulated merchant behavior patterns.

Three structural moats protect this position.

First, time-accumulated signal. We know which merchants expire codes without warning, which stores have buggy cart logic, which code formats each retailer uses. Fifteen years of behavioral data. A competitor starting today starts at zero.

Second, the human network. Tens of thousands of verifiers with trust scores, accuracy histories, and invested precision. You cannot assemble this overnight. You can hire contractors. You cannot manufacture years of calibrated judgment.

Third, the edge case corpus. Our codebase is scar tissue from every strange merchant behavior we have encountered over fifteen years. The merchant who hides the promo field behind a JavaScript hover event. The checkout that requires cookies from a specific referrer. The code that only works if you have exactly three items in cart. The flash sale that activates four minutes late because of CDN caching. This corpus cannot be replicated without experiencing the failures firsthand.

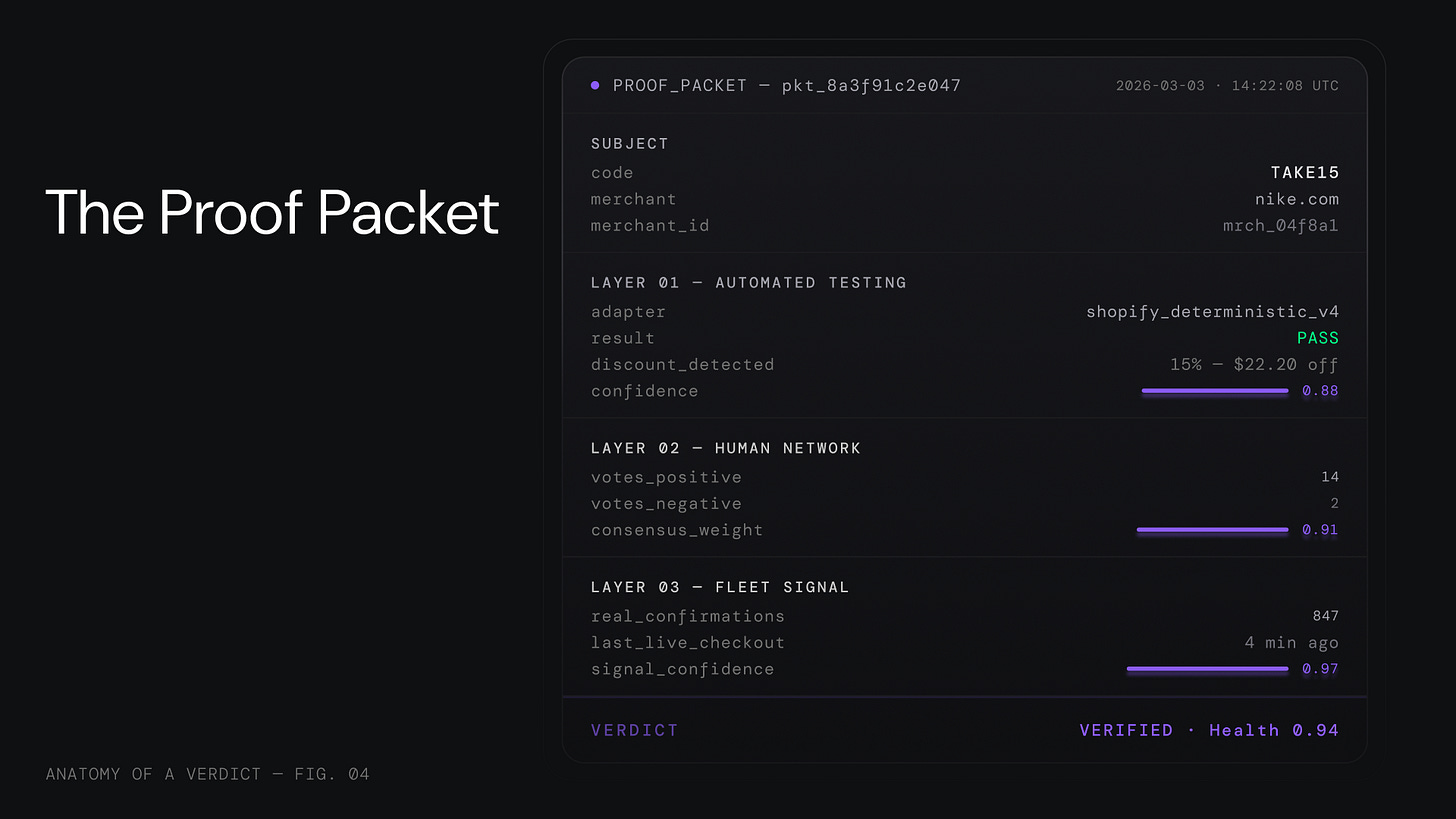

The Proof Packet

Every verdict generates what we call a Proof Packet, a structured evidence bundle documenting exactly how the conclusion was reached. Automated test results, human consensus data, fleet signal, timestamps, confidence tiers. One auditable package.

This is not just internal tooling. The Proof Packet is what we serve to AI agents.

When your AI assistant says “I found a 20% discount at Nike,” it needs to answer four questions. Is this code real? Will it work for this cart? What is the confidence level? What is the evidence? That is not a coupon lookup. That is a verification API call. The Proof Packet is the answer.
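What an agent might receive can be sketched as a serializable record. This Python example is a guess at the shape, not the published schema. Every field name, the `"high"` tier label, and the sample values are assumptions built from the components listed above.

```python
import json
from dataclasses import dataclass, asdict


@dataclass
class ProofPacket:
    code: str
    merchant: str
    verdict: str            # e.g. "works" or "no_verified_codes"
    confidence_tier: str    # assumed labels such as "high" or "low"
    automated_tests: dict
    human_consensus: dict
    fleet_signal: dict
    computed_at: str        # ISO 8601 timestamp


def to_json(packet: ProofPacket) -> str:
    """Serialize the packet with stable key order for auditing."""
    return json.dumps(asdict(packet), sort_keys=True)


example = ProofPacket(
    code="SAVE20",
    merchant="shop.example",
    verdict="works",
    confidence_tier="high",
    automated_tests={"passed": 3, "failed": 0},
    human_consensus={"yes": 4, "no": 0},
    fleet_signal={"successful_checkouts": 17},
    computed_at="2026-01-01T12:00:00Z",
)
```

An agent can parse the JSON, read `verdict` and `confidence_tier`, and keep the rest of the bundle as evidence for its own decision.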

What Comes Next

We built this system for humans clicking on websites. The next generation is for AI agents executing transactions.

We are building the Applicability Engine, moving from “does this code exist?” to “will this code work for this specific cart?” And we are building agent-native endpoints where the Proof Packet becomes infrastructure for machine-to-machine commerce.

In a world of AI-generated noise, the ability to say definitively “there is nothing to find” becomes the scarcest resource.

We do not sell codes. We sell verdicts.

We published the full machine. Read the whitepaper on GitHub.

P.S. This whitepaper is the applied proof of the Axiomatic Intelligence paradigm we published last month. AxI describes the theory. Anatomy of a Verdict shows it working in production. Both are open. Both are on GitHub.