The Beige Singularity: How the Web Poisoned AI—and AI is Poisoning It Back

The internet promised to make shopping easier. It made it into a part-time job.

Part 1 of a three-part series on Axiomatic Intelligence, a post-probabilistic architecture for the age of noise.

I bought the Breville Barista Express because ChatGPT told me to. Four-and-a-half stars. Amazon’s Choice. “The Breville Barista Express is widely regarded as the best entry-level espresso machine for home baristas.” I read that sentence. I believed it. I clicked.

Six months later, the grinder seized. I went to Reddit. I typed “Breville Barista Express grinder problems” and found not one viral thread, but dozens of isolated complaints spanning years. “Grinder skipping.” “Terrible grinding noise.” “Not grinding fine enough.” The plastic impeller fails. Not randomly. Predictably. Somewhere between 12 and 18 months, depending on usage. The failure mode was known. Documented. Exposed.

The AI never mentioned it.

I sat with that. Not angry—curious. ChatGPT had access to the same internet I did. The Reddit threads were public. The failure data was there. Why did the AI recommend a machine with a documented self-destruct timer? I decided to find out. I would trace the recommendation backward. Follow the citation chain to its source. Somewhere in that chain, something went wrong. I wanted to know what. This is what I found.

The First Layer: The Summary

I asked ChatGPT to show its work. “Why did you recommend the Breville Barista Express?”

It told me. Major review sites ranked it highly. Multiple outlets called it a “best buy.” The consensus was clear. Consensus. That word stuck. I opened the reviews. They were thorough. Detailed. Professional. They recommended the Breville. But when I searched for “impeller failure” or “plastic wear” or “18 months,” I found nothing.

Professional reviews test machines for weeks, not years. They pull shots. They measure temperature. They cannot catch an 18-month failure mode. Their methodology is structurally blind to it.

This is not a criticism of reviewers. They do more primary research than almost anyone. The problem is that even their research has limits—and the AI does not know where those limits are. The AI read the reviews. It ingested the recommendation. It did not ingest the epistemic boundary. It did not know that “Recommended” means “Recommended based on short-term testing.” It treated a bounded claim as an unbounded truth.

I kept digging.

The Second Layer: The Echo

I started reading reviews. The language felt familiar. “Built-in conical burr grinder.” “Café-quality espresso at home.” “Third wave specialty coffee.” “15-bar Italian pump.”

I went back to Breville’s product page. The same phrases. The same frame. The sentences were not identical, but they were not independent. This is how pollution spreads. A brand writes copy. The copy is optimized to sound true. A reviewer reads the copy. The reviewer paraphrases it. The paraphrase enters the review. The review enters the training data. The AI reads the review. The AI summarizes it.

At no point does anyone test whether “café-quality” maps to reality. The phrase is a vibe. It sounds like a specification. It is not. There is no ISO standard for café-quality. No blind test. No measurement protocol. It means “good” with better marketing. But by the time it reaches the AI, it has been repeated across so many sites that it looks like consensus.

I started calling this Receipt Cosplay.

The AI performs the appearance of research. It cites sources. It attributes claims. It sounds rigorous. But if you trace the citations backward, there is no empirical anchor. Everyone is quoting everyone else. The “receipt” is a photocopy of a photocopy of a photocopy. The original was blank.

The Third Layer: The Incentive

I found a “Best Espresso Machines of 2024” listicle. The Breville was number two. I scrolled to the bottom. I found the disclosure.

“This article contains affiliate links. We may earn a commission if you make a purchase.” The author gets paid when I click. Not when I keep the machine. Not when I am satisfied. Not when the impeller survives 18 months. When I click. I looked at the list again. I cross-referenced the rankings against affiliate commission rates. The correlation was not perfect. It was not zero either.

Here is the physics. An affiliate site has two optimization targets: traffic and conversion. Traffic comes from ranking on Google. Conversion comes from recommending products people will buy. Neither target requires accuracy. Neither target penalizes the 18-month failure. The site that warns you about the plastic impeller loses the click. The site that hides the flaw wins it. The incentive gradient points toward omission.

Now feed this into an AI. ChatGPT does not know these lists are affiliate content. It does not know the rankings reflect commission structures. It reads “Best Espresso Machine” and treats “Best” as an empirical claim. The word “Best” is not empirical. It is economic. The AI cannot tell the difference.

The Fourth Layer: The Recursion

Here’s where it gets strange. The web in 2024 was polluted. We established this. SEO content. Marketing absorption. Affiliate incentives. The AI reads this polluted web. It produces summaries. The summaries are fluent. Confident. Wrong. Now the summaries go back onto the web.

Someone asks ChatGPT for espresso machine advice. ChatGPT answers. The person posts the answer on a forum. Or tweets it. Or writes a blog post that starts with “According to AI...” The answer is now on the web. GPT-5 trains on that web. It reads the forum post. It does not know the forum post was generated by GPT-4. It treats the post as independent evidence. Another source agreeing that the Breville is good. The error has reproduced.

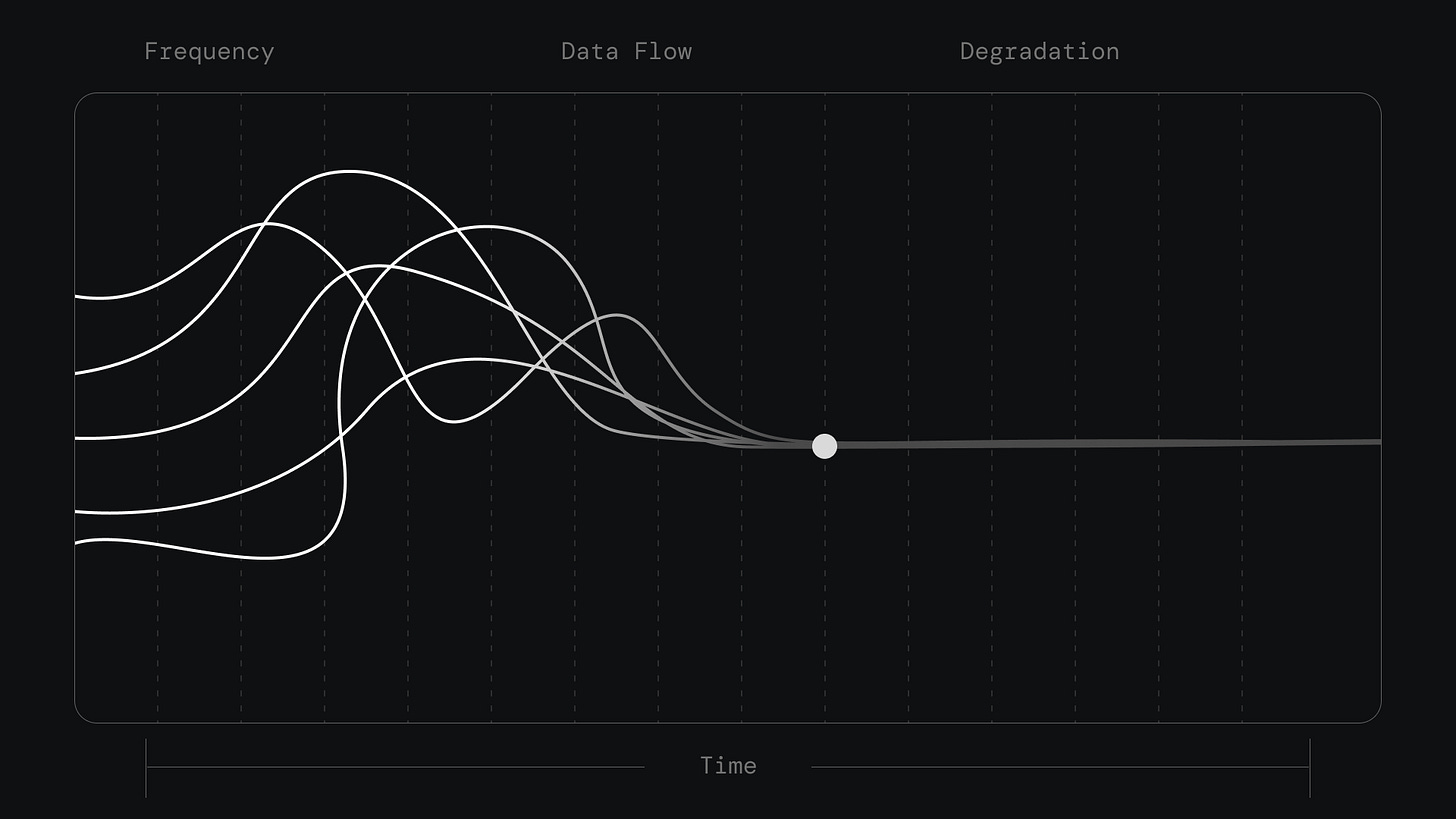

Run this forward. Each generation of AI ingests the previous generation’s outputs. The outputs contain errors. The errors do not cancel. They compound. A 2024 hallucination becomes a 2025 training example. By 2026, the hallucination has been repeated so many times it looks like consensus.

The technical term is Recursive Variance Collapse. Variance is signal. When sources disagree, the disagreement encodes information. The Reddit user who documented the grinder failure and the blogger who loved the Breville are both data. Their conflict tells you something true: this machine works for some people and fails for others. The conditions of success and failure are learnable.

Summarization kills variance. It averages. It smooths. It finds the center. Do this recursively and the center drifts. Not toward truth. Toward the median of the training data. If the training data is polluted, the median is polluted. If the training data includes previous AI outputs, the median includes previous AI errors.

Everything converges. The distinctive voice of the angry Reddit poster vanishes. The specific failure mode gets compressed into “some users report issues.” The 18-month timeline disappears entirely. What remains is a single, confident, wrong answer.

I call this the Beige Singularity.

The internet is converging toward beige. Toward the average of the average of the average. Toward a world where every AI gives the same recommendation because every AI trained on the same recursive soup. The Breville Barista Express. Four-and-a-half stars. Amazon’s Choice. Widely regarded. The impeller will wear. The AI will not tell you.

The Cost

You might think this is a minor problem. Buy the wrong espresso machine. Return it. Move on. The cost is not the return. The cost is the four hours you spent researching before you bought. The 15 tabs. The three Reddit threads. The five YouTube videos. The mental spreadsheet comparing burr materials and boiler types and price-to-performance ratios.

You did this work because you could not trust the answer. You sensed something was wrong. You could not articulate it, but you felt it. So you built your own research apparatus. You became an amateur grinder metallurgist to buy a coffee machine.

This is the tax. The internet promised to reduce friction. It created a new kind of friction: the friction of not knowing what to trust. And the cost does not end at checkout. You bought the machine. Now you wait for the failure. Every morning you pull a shot and wonder. Is this the day? Did I make the right call? The thread said 18 months. You are at 14. The anxiety is low-grade and constant. You cannot prove it is there. You cannot make it leave.

I call this Epistemic Anxiety. The dread of knowing you cannot know.

The old advice was “do your research.” But research assumes a clean source. The source is polluted. Research now means filtering pollution. Most people cannot do this. Most people do not want to. They just want to buy an espresso machine. The system has failed them. Most of them don’t know it yet.

The Asymmetry

Here’s the part that breaks your brain. The AI is confident. You are anxious. This is backwards. Claude has no idea if the Made In pan is good. It has no way to know. It summarized text. Text can lie. But Claude sounds certain. “The Made In Frying Pan is highly regarded for its even heat distribution.”

You have doubt. Appropriate doubt. You sense that “highly regarded” is not evidence. But you can’t articulate why. The AI sounds smart. You wonder if you’re overthinking. This is the asymmetry. Confidence without evidence is the structure of a con. The con artist never hesitates. The mark doubts themselves.

We have built a system where the AI plays the con artist—not through malice, but through architecture. It was trained to sound confident. It was not trained to know when confidence is warranted.

The Question

I traced my espresso machine recommendation to its source. I found no source. I found a recursive loop of citations pointing at each other. Marketing copy dressed as reviews. Affiliate incentives dressed as rankings. AI summaries dressed as research.

The Beige Singularity is not a metaphor. It is a technical phenomenon. The internet is collapsing into itself. The variance that encodes truth is being averaged away. What remains is a single confident signal that means nothing.

You cannot fix this with a better prompt. The problem isn’t the AI. The problem is the architecture. Summarization is the wrong primitive. The question is whether there is an alternative. What if AI did not summarize? What if it adjudicated? Summary asks: “What does the web say?” Adjudication asks: “What is actually true?”

These are different questions. The first averages claims. The second tests them. There is a way to build an AI that litigates. That takes a marketing claim and runs it against the physics of the product. That finds the Reddit thread and asks: does this failure mode appear in the spec sheet? In the warranty terms? In the return data? There is a way to build an AI that produces not confidence, but verdicts. Not summaries, but judgments. Not vibes, but receipts.

That is what we are building.

The architecture has a name. We call it Axiomatic Intelligence. My next post will show you the physics of the system, but here’s one piece now: The core insight is thermodynamic. Trust cannot be promised. Trust must be architected. If a system can be corrupted by incentives, it will be corrupted by incentives. The only solution is structural isolation.

We call this the Fiduciary Diode. The ranking engine cannot see revenue data. Not “does not”—cannot. The information is architecturally blocked. The system that decides what is true is physically separated from the system that decides what is profitable.

More coming soon. The architecture is open. The whitepaper is public. If you want to read ahead, the protocol is on GitHub.